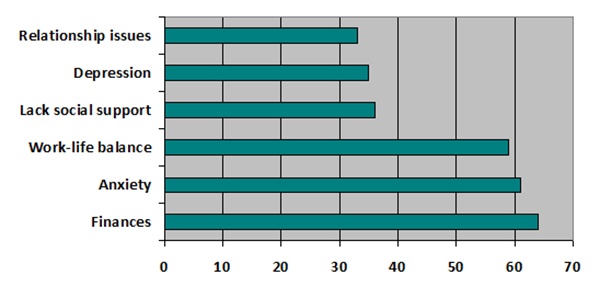

It’s November: Thanksgiving is here, and Christmas/Chanukah is just around the corner. It’s a busy time of year, especially while completing your degree and dealing with stressors. Clay listed the top 15 grad school stressors; here are seven of them, by the percentage of students experiencing them. (One thing you see immediately is that you are not alone!)

Manning, Curtis, & McMillen (1999), in their book Stress, cite literature and suggest activities to combat stress:

- Three good workouts per week (warm-up, stretch, the workout, cool-down, stretch).

- Sleep well (6-1/2 to 8-1/2 hrs per night).

- Meditation (sit in comfortable quiet place, close eyes and be still, breathe deeply, regularly, and slowly).

- Relax (look at Benson’s The Relaxation Response article).

- Eat 21 nutritious meals per week (look at the food guide pyramid).

- Get a massage

- Breathing (full, deep breaths several times a day).

I would add a few more, such as counseling if needed, social support /church/ community, time in nature, improve self-efficacy, have a strong purpose in life, and humor. Here’s a poem:

TAKE TIME

Take time to think…

it is the source of power

Take time to play…

it is the secret of perpetual youth

Take time to read…

it is the basis of knowledge.

Take time to be friendly…

it is the road to happiness.

Take time to laugh…

it is the music of the soul.

Take time to give…

it is too short a day to be selfish.

Take time to work…

it is the price of success.

I hope this helps and I wish you all a wonderful, peaceful Thanksgiving.

Best regards,

Statistics Solutions Team

When a researcher chooses to create their own survey instrument, it is appropriate to run an exploratory factor analysis to assess for potential subscales within the instrument. However, it is seemingly unnecessary to run an exploratory factor analysis (EFA) on an already established instrument. In the case of an already established instrument, typically, a Cronbach’s alpha is the acceptable way to assess reliability.

The Bonferroni correction is also known as a Bonferroni-type adjustment.

It is made to counter inflated Type I error (a higher chance of a false positive: rejecting the null hypothesis when you should not).

When conducting multiple analyses on the same dependent variable, the chance of committing a Type I error increases, thus increasing the likelihood of coming about a significant result by pure chance. To correct for this, or protect from Type I error, a Bonferroni correction is conducted.

The Bonferroni correction is conservative: although it protects from Type I error, it is vulnerable to Type II errors (failing to reject the null hypothesis when you should in fact reject it).


The correction alters the p-value threshold to a more stringent value, making a Type I error less likely.

To get the Bonferroni-corrected (adjusted) p-value threshold, divide the original α-value by the number of analyses on the dependent variable. The researcher assigns a new alpha for the set of dependent variables (or analyses) that does not exceed some critical value: $\alpha_{\text{critical}} = 1 - (1 - \alpha_{\text{altered}})^{k}$, where $k$ = the number of comparisons on the same dependent variable.

However, when reporting the new p-value threshold, the version rounded to 3 decimal places is typically reported. This rounded version is not technically correct, because rounding introduces an error. Example: 13 correlation analyses on the same dependent variable would indicate the need for a Bonferroni correction of $\alpha_{\text{altered}} = .05/13 = .004$ (rounded), but $\alpha_{\text{critical}} = 1 - (1 - .004)^{13} = 0.051$, which is not less than 0.05. With the non-rounded version, $\alpha_{\text{altered}} = .05/13 = .003846154$ and $\alpha_{\text{critical}} = 1 - (1 - .003846154)^{13} = 0.048862271$, which is in fact less than 0.05! SPSS does not currently have the capability to set alpha levels beyond 3 decimal places, so the rounded version is presented and used.

Another example: 9 correlations are to be conducted between SAT scores and 9 demographic variables. To protect from Type I Error, a Bonferroni correction should be conducted. The new p-value will be the alpha-value (αoriginal = .05) divided by the number of comparisons (9): (αaltered = .05/9) = .006. To determine if any of the 9 correlations is statistically significant, the p-value must be p < .006.
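The arithmetic in the two examples above can be checked with a few lines of Python. This is only a minimal sketch: the function names `bonferroni_alpha` and `familywise_alpha` are made up for illustration and do not come from SPSS or any other package.

```python
def bonferroni_alpha(alpha_original: float, k: int) -> float:
    """Altered alpha: the original alpha divided by the number of comparisons k."""
    if k < 1:
        raise ValueError("k must be at least 1")
    return alpha_original / k


def familywise_alpha(alpha_altered: float, k: int) -> float:
    """Critical alpha across k comparisons: 1 - (1 - alpha_altered)^k."""
    return 1 - (1 - alpha_altered) ** k


# 13 correlations on the same dependent variable
unrounded = bonferroni_alpha(0.05, 13)
rounded = round(unrounded, 3)  # what a 3-decimal setting would use

print(f"rounded alpha   = {rounded:.3f}, critical = {familywise_alpha(rounded, 13):.3f}")
print(f"unrounded alpha = {unrounded:.9f}, critical = {familywise_alpha(unrounded, 13):.9f}")

# 9 correlations between SAT scores and demographic variables
print(f"alpha for 9 comparisons = {bonferroni_alpha(0.05, 9):.3f}")
```

Running it shows the rounded threshold pushing the critical alpha just above .05, while the unrounded threshold keeps it just below.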

- Positively worded: e.g., I know that I am welcomed at my child’s school; I feel that I am good at my job; having a wheelchair helps.

- Negatively worded: e.g., I feel isolated at my child’s school; I am not good at my job; having a wheelchair is a hindrance.

- Likert scaled responses can vary: e.g., 1 = never, 2= sometimes, 3 = always; OR 1 = strongly disagree, 2 = disagree, 3 = neutral, 4 = agree, 5 = strongly agree

- When creating a composite score from specific survey items, we want to make sure we are looking at the responses in the same manner. If we have survey items that are not all worded in the same direction, we need to re-code the responses. E.g.: I want to make a composite score called “helpfulness” from the following survey items:

- 5-point Likert scaled, where 5 = always, 4 = almost always, 3 = sometimes, 2 = almost never, 1 = never

- 1-I like to tutor at school

- 2-I am usually asked by my friends to help with homework

- 3-I typically do homework in a group setting

- 4-I do not go over my homework with others

In this example, survey items 1 – 3 are all positively worded, but survey item 4 is not. When creating the composite score, we wish to make sure that we are examining the coded responses the same way. In this case, we’d have to re-code the responses to survey item 4 to make sure that all responses for the score “helpfulness” are correctly interpreted; the recoded responses for survey item 4 are: 1 = always, 2 = almost always, 3 = sometimes, 4 = almost never, 5 = never.

Now, all responses that are scored have the same direction and thus, can be interpreted correctly: positive responses for “helpfulness” have higher values and negative responses for “helpfulness” have lower values.
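As a rough illustration of the recoding described above, here is a short Python sketch. The function names are hypothetical, and taking the mean of the four items as the composite is an assumption; the text does not say whether the composite is a sum or a mean.

```python
SCALE_MIN, SCALE_MAX = 1, 5  # 5-point scale: 5 = always ... 1 = never


def reverse_code(response: int) -> int:
    """Flip a response on the 1-5 scale so that 5 becomes 1, 4 becomes 2, and so on."""
    if not SCALE_MIN <= response <= SCALE_MAX:
        raise ValueError(f"response must be between {SCALE_MIN} and {SCALE_MAX}")
    return SCALE_MAX + SCALE_MIN - response


def helpfulness_score(item1: int, item2: int, item3: int, item4: int) -> float:
    """Composite 'helpfulness' score (assumed here to be the mean of the items).

    Items 1-3 are positively worded and used as-is; item 4 is negatively
    worded, so it is reverse-coded before averaging.
    """
    items = [item1, item2, item3, reverse_code(item4)]
    return sum(items) / len(items)


# A respondent who always tutors and helps (5, 5, 5) and never skips
# going over homework with others (item 4 = 1) scores at the top.
print(helpfulness_score(5, 5, 5, 1))
```

After recoding, a higher composite always means more helpfulness, whichever way the item was worded.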

Also, you may wish to change the number of responses. For example, you may wish to dichotomize or trichotomize the responses. In the example above, you can trichotomize the responses by recoding responses “always” and “almost always” to 3 = high, “sometimes” to 2 = sometimes, and “almost never” and “never” to 1 = low. However, please be advised to make sure that you have sound reason to alter the number of responses.
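The trichotomizing step can be sketched the same way. The function name `trichotomize` is hypothetical, and the sketch assumes the original coding (5 = always through 1 = never); a negatively worded item such as item 4 would need to be re-coded first, as described earlier.

```python
def trichotomize(response: int) -> int:
    """Collapse a 5-point response (5 = always ... 1 = never) into three levels."""
    if response in (4, 5):   # "almost always" and "always"
        return 3             # high
    if response == 3:        # "sometimes"
        return 2
    if response in (1, 2):   # "never" and "almost never"
        return 1             # low
    raise ValueError("response must be an integer from 1 to 5")


responses = [5, 4, 3, 2, 1]
print([trichotomize(r) for r in responses])
```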

Cox event history is a branch of statistics that deals mainly with the death of biological organisms and the failure of mechanical systems. It is also sometimes referred to as a statistical method for analyzing survival data. Cox event history is also known by various other names, such as survival analysis, duration analysis, or transition analysis. Generally speaking, this technique involves modeling data structured in a time-to-event format. The goal of the analysis is to understand the probability of an event occurring. Cox event history was primarily developed for use in the medical and biological sciences. However, the technique is now frequently used in engineering as well as in statistical and data analysis.

One of the key purposes of the Cox event history technique is to explain the causes behind the differences or similarities between the events encountered by subjects. For instance, Cox regression may be used to evaluate why certain individuals are at a higher risk of contracting some diseases. Thus, it can be effectively applied to studying acute or chronic diseases, hence the interest in Cox regression in the medical sciences. The Cox event history model mainly focuses on the hazard function, which gives the rate at which an event occurs at a specific instant in time among subjects who have not yet experienced the event.

The basic Cox event history model can be summarized by the following function:

$$h(t) = h_0(t)\, e^{b_1 X_1 + b_2 X_2 + \cdots + b_n X_n}$$

where

$h(t)$ = rate of hazard at time $t$

$h_0(t)$ = baseline hazard function

$b_1, \ldots, b_n$ and $X_1, \ldots, X_n$ = coefficients and covariates.
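To make the function above concrete, here is a minimal Python sketch that evaluates it for given coefficients and covariates. It does not fit a model; the coefficient values, the covariate values, and the constant baseline hazard of 0.02 are all made-up assumptions for illustration.

```python
import math
from typing import Callable, Sequence


def cox_hazard(
    t: float,
    baseline_hazard: Callable[[float], float],
    coefficients: Sequence[float],
    covariates: Sequence[float],
) -> float:
    """Evaluate h(t) = h0(t) * exp(b1*X1 + b2*X2 + ... + bn*Xn)."""
    if len(coefficients) != len(covariates):
        raise ValueError("need exactly one coefficient per covariate")
    linear_predictor = sum(b * x for b, x in zip(coefficients, covariates))
    return baseline_hazard(t) * math.exp(linear_predictor)


def constant_baseline(t: float) -> float:
    """Hypothetical baseline hazard: the same rate at every time point."""
    return 0.02


coefs = [0.5, -0.2]        # hypothetical b1, b2
subject_a = [1.0, 3.0]     # X1 = 1 (e.g., exposed), X2 = 3
subject_b = [0.0, 3.0]     # X1 = 0 (not exposed), X2 = 3

# The two subjects differ only in X1, so the baseline hazard cancels out
# and the hazard ratio equals exp(b1) at every time t.
ratio = cox_hazard(2.0, constant_baseline, coefs, subject_a) / cox_hazard(
    2.0, constant_baseline, coefs, subject_b
)
print(ratio, math.exp(0.5))
```

Because the baseline hazard cancels in the ratio, the comparison between two subjects does not depend on the shape of $h_0(t)$.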

Cox event history can be categorized mainly under three models: nonparametric, semi-parametric and parametric.

Non-parametric: The non-parametric model does not make any assumptions about the hazard function or the variables affecting it. Consequently, only a limited number of variable types can be handled with the help of a non-parametric model. This type of model involves the analysis of empirical data showing changes over a period of time and cannot handle continuous variables.

Semi-parametric: Similar to the non-parametric model, the semi-parametric model also does not make any assumptions about the shape of the hazard function or the variables affecting it. What makes this model different is that it assumes the rate of the hazard is proportional over a period of time. The estimates for the hazard function shape can be derived empirically as well. Multivariate analyses are supported by semi-parametric models and are often considered a more reliable fitting method for use in a Cox event history analysis.

Parametric: In this model, the shape of the hazard function and the variables affecting it are determined in advance. Multivariate analyses of discrete and continuous explanatory variables are supported by the parametric model. However, if the shape of the hazard function is specified incorrectly, the results could be biased. Parametric models are frequently used to analyze the nature of time dependency. They are also particularly useful for predictive modeling, because the parametric model specifies the shape of the baseline hazard function.

Cox event history analysis involves certain assumptions. As with every other statistical method or technique, a violated assumption will often make the results statistically unreliable. The major assumption in Cox event history is that the independent variables do not interact with time. In other words, the effect of each independent variable on the hazard rate should remain constant over time.

In addition, hazard rates are rarely smooth in reality. Frequently, these rates need to be smoothed over in order for them to be useful for Cox event history analysis.

Applications of Cox Event History

Cox event history can be applied in many fields, although initially it was used primarily in medical and other biological sciences. Today, it is an excellent tool for other applications and is frequently used as a statistical method where the dependent variables are categorical, especially in socio-economic analyses. In economics, for instance, Cox event history is used extensively to relate macro- or microeconomic indicators in terms of a time series; one could determine the relationship between unemployment or employment over time. In commercial applications, Cox event history can be applied to estimate the lifespan of a certain machine and its breakdown points based on historical data.

For businesses that need statistics and data analysis, presentations, reports, reviews, and so on, but are not fully equipped with the resources or expertise to produce the material, a statistics consultant acts as a problem solver. A statistical consultant takes on the task of measuring, interpreting, and describing given topics and of formulating information or collecting data for clients. In demand across many fields, statistics consultants have confirmed their importance in today’s world. Cutting-edge advancements, developments, and competition call for a team of experts willing to put their minds together and work toward success in all domains.

A statistics consultant plays a significant role. Constantly busy and on the run, a client does not always have time to perform all of the various tasks involved in statistical analysis. A statistics consultant, however, works to provide end-to-end information, data, and facts and figures to clients. Statistics consultants work in various fields and apply diverse techniques in areas like business, medicine, psychology, law, industry, and government. They work to provide precise and accurate results. A statistics consultant acts as an advisor to clients and counsels them on findings and research. The role differs from project to project. A statistics consultant designs experiments and research studies for clients; the consultant determines the procedure and technique, conducts the research and data analysis, and provides reports and reviews. For the businesses concerned, a statistical consultant is a reliable and effective source of exact data and information. A statistical consultant also chooses, constructs, and analyzes the tools and methods used to deliver quality results to clients. In this way, the statistics consultant supports the successful working of business operations, using clear-cut methods designed to attain the desired results.

A statistics consultant carries a lot of weight and responsibility in the world of business today. A consultant should possess the communication and verbal skills essential for interacting with clients and should be able to relate to clients cordially. Clients are always the first priority, and the statistical consultant always strives to meet their requirements. Besides these communication skills, the statistics consultant must also possess scientific, statistical, and computer skills. Statistical knowledge is necessary because a consultant has to apply the required technical skills in both design and implementation. A scientific outlook is equally necessary. Finally, basic computer skills are necessary because statistical software helps ensure reliable and accurate results. The statistics consultant must therefore acquire these traits and skills in order to process and provide adequate data.

A statistics consultant is beneficial across industries. However, the special advantage is that small-scale industries gain added value and can expect increased profits. With a statistics consultant completing the given tasks on time, within budget, and with skilled techniques and methods, small-scale industries (in particular the smallest ones, which would otherwise not have many opportunities) gain tremendously. With professionals handling the research work and required undertakings, the chances of a large boost in profit for such industries increase.

A statistics consultant is therefore necessary in today’s modern era. By providing precise and well-defined information and data to clients, a statistics consultant is invaluable. With a highly skilled team of experts, the horizon and scope of amateurs in the field of statistics broaden, enriching businesses with the best of services as required by their customers. With comprehensive techniques, the statistics consultant is of great importance to all organizations. Consultants are proficient and add much value to businesses.


For the past 20 years, Statistics Solutions’ mission has been to help graduate students graduate. One of the places students get stuck is with the writing aspects of Capella’s Scientific Merit Review (SMR). Whether they go to Berkeley or Capella, students need help. Students at Capella (“learner” always reminded me of Milgram’s 1960s Obedience to Authority study), however, have a couple of things working against them. First, they’re not on campus to get the help they need, and second, they’re paying tuition as the process continues.


My staff and I have worked with over 2,000 graduate students, and despite the resources at the universities, some still need help from an objective, non-evaluative professional. We are such professionals! When we work with students, they typically get stuck in the same few places: research questions, proposed data analysis, and the target population and participant selection.

Research questions are easy to handle: make sure the constructs (your measures) are obtainable and measure what you want to measure, and that you arrange these constructs in statistical language. For example, if you have constructs A and B and want to relate them (read “correlate” them), then say that. If you are assessing whether A predicts B (read “regression”), then say predict, impact, or account for variability in B.

Capella’s Scientific Merit Review also asks for a data plan. Data analysis plans are based on two things: the statistical language you used in the research questions and the level of measurement of your variables. We have resources on our website, or, if you need more one-on-one help, you can click here. By the way, Capella will send you back for a round of revisions (tuition not included) if you don’t have this correct. When I went to school 100 years ago, the IRB, to which we would have sent our SMR, made sure only that we didn’t hurt our participants, but now they look at everything. And let’s face it, a revision costs you both time and money.

Sample size is typically trickier still (even with the help of G*Power). There are two tricks: selecting the right analysis (see the data plan above) and selecting the effect size. Effect size can be derived by looking at past research using these constructs and analyses, then calculating or noting the effect size used. There’s a realistic aspect too: for dissertations (and I’ve seen thousands of them), large and medium effect sizes, requiring relatively small samples (under 100 participants), are the norm. Requesting a small effect size (small effects take a lot of people to detect) typically requires 300-500 participants, and this is just not reasonable for a dissertation student to obtain. Here are a couple of resources (sample size tool; power analysis) to get you started. I should note that the exception is when you are conducting EFA, CFA, path analysis, or structural equation modeling; these techniques typically require 150 or more participants.
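To see how strongly the effect size drives the required sample, here is a rough Python sketch for one specific case: a two-tailed test of a single Pearson correlation, using the Fisher z approximation. This is not how G*Power computes its exact values, and the function name, the alpha of .05, the power of .80, and Cohen's conventional benchmarks (r = .10, .30, .50) are assumptions chosen for illustration. Exact figures depend on the analysis, alpha, and power, so they will not necessarily match the ranges quoted above.

```python
import math
from statistics import NormalDist


def n_for_correlation(r: float, alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate n needed to detect a correlation of size r (two-tailed).

    Uses the Fisher z approximation:
        n = ((z_(1 - alpha/2) + z_power) / atanh(|r|))^2 + 3
    """
    if not 0 < abs(r) < 1:
        raise ValueError("r must be non-zero and strictly between -1 and 1")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    n = ((z_alpha + z_power) / math.atanh(abs(r))) ** 2 + 3
    return math.ceil(n)


# Cohen's conventional benchmarks for a correlation (assumed here)
for label, r in [("small", 0.10), ("medium", 0.30), ("large", 0.50)]:
    print(f"{label:6s} effect (r = {r:.2f}): about {n_for_correlation(r)} participants")
```

The required sample grows quickly as the expected effect shrinks, which is why the choice of effect size matters so much for a dissertation.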

I’m going to leave you with a Dissertation Template to look at. It’s free and you may find some definition of terms helpful.

Good luck with your Scientific Merit Review, and call us if you run into trouble. Contact us by clicking here or call us at (877) 437-8622 (M-F, 9-5 EST).

PS: A Stanford Ph.D. student just called; their private stats consultant just took another job. See, everybody needs help sometimes, even schools with lots of resources!

“To a worm in horseradish, the whole world is horseradish”

–Isaac Bashevis Singer

The horseradish phrase was shared by Malcolm Gladwell when talking about Howard Moskowitz, a market researcher who really got into his research on horizontal segmentation of spaghetti sauce. (For a really entertaining TED talk, click here.) For my part, I’ve been thinking a lot about segmentation analysis for the past several weeks. Segmentation is a process of grouping things or people together who have similar attributes, while things or people in different groups are maximally dissimilar. For example, auto insurance purchasers may fall into several segments: cost conscious, brand loyalists, and internet buyers. In each of these groups the members are very similar (e.g., the cost-conscious purchasers may tend to be young, have ample time to shop, and may be high-risk drivers; brand loyalists may be older, have been with their agent for years, and appreciate the relationship they have with their agent, etc.). Now, everywhere I go, I cannot help but think about how and why things are grouped together. Not only do I see the groupings, I see the messages that advertisers are using to speak to each group: a world of horseradish.

I don’t think I’m alone here. Most people have had this experience: when purchasing a car, you somehow become aware of your exact car and color all around you. Of course, there are not more of “your” cars out there on the road, but your awareness has changed. But how did we miss that information before? And what are we currently missing or filtering out?

This kind of tunnel awareness can happen in the final stage of any research. While working on a dissertation or thesis, the whole world can feel like only a dissertation or thesis project. Our awareness is primed, our attention set. So I’m here to share with you that you may be in that horseradish; it’s likely not your first, and it’s likely not your last. So, take a deep breath and look around. There are many more horseradish plants to sample after the dissertation or thesis is finished!

Stephen Covey passed away this past month, and so I dedicate this newsletter to his teachings. Covey is most famous for writing The 7 Habits of Highly Effective People. In addition to his 7 Habits, he spoke passionately about being a leader, private victories, and other topics related to the choices we make in our lives. One of his important teachings was a concept borrowed from Viktor Frankl; in his words, “in between stimulus and response there is a space, and in that space lay our freedom to respond and our happiness.” (Click here for an inspirational Frankl video.) Covey then added that “we have unique human endowments as humans: self-awareness, conscience, independent will, and imagination.” Further, he said, “we are not the product of our past; rather, we have the power to choose.” So, in that space, we can apply these endowments. To listen to an entertaining psychologist’s perspective as to why we make bad decisions, watch Dan Gilbert.

With all of your competing demands, what are your priorities? What are the most important tasks for you to complete? Everyone makes demands on you: your boss, your children and spouse, your community commitments. Covey would say that you are responsible for designing your life: “you are the programmer” (Habit 1), so now write the program (Habit 2) and live the program (Habit 3). Regarding the writing of your program, listen to a smart Harvard psychologist speak about how making lists saves lives.

We have the ability to choose our priorities, our reactions to the past, and in the final analysis, we are, as Sartre has said, “condemned to freedom.” In your calendar, schedule dissertation time, family and work commitments, and exercise. Make appointments with yourself—and keep them!

As many of you will be graduating this August, I say congratulations! For those who are planning to finish soon, get the support you need and get that darn dissertation or thesis finished. I can be personally reached at: [email protected]. There is a whole lot of learning and joy that happens after graduation. I’ll leave you with a quote from Sartre:

“Man is not the sum of what he has already, but rather the sum of what he does not yet have, of what he could have.”

To all the Covey lovers out there, keep practicing those Habits.

The best to you and your family,

James Lani, Ph.D.

Statistics Solutions