Understanding Factor Analysis: A Comprehensive Overview

What Is Factor Analysis?

Researchers use factor analysis, a sophisticated statistical method, to group and reduce a large set of variables into fewer, more manageable factors or dimensions. This technique helps uncover latent variables or constructs that researchers cannot directly observe but infer from the relationships among observed variables. The primary aim of factor analysis is to simplify complex data sets, making them easier to interpret and utilize, particularly in scenarios requiring fewer input variables, such as in regression models.
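The idea of latent variables "behind" observed ones is usually formalized as the common factor model. In the conventional notation below (the symbols are standard textbook choices, not taken from this article), each of p observed variables in the vector x is expressed through k < p latent factors f plus a variable-specific error term ε:

```latex
\mathbf{x} = \boldsymbol{\mu} + \boldsymbol{\Lambda}\,\mathbf{f} + \boldsymbol{\varepsilon},
\qquad
\boldsymbol{\Sigma} = \operatorname{Cov}(\mathbf{x})
  = \boldsymbol{\Lambda}\boldsymbol{\Phi}\boldsymbol{\Lambda}^{\top} + \boldsymbol{\Psi}
```

Here Λ (p × k) holds the factor loadings, Φ is the correlation matrix of the factors (the identity matrix when the factors are uncorrelated), and Ψ is a diagonal matrix of unique variances, diagonal because the errors are assumed to be uncorrelated with each other and with the factors. Reproducing all pairwise correlations among p variables from a much smaller set of loadings is what makes the technique a data-simplification tool.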

Key Applications and Techniques of Factor Analysis

Data Simplification and Dimension Reduction: Factor analysis allows researchers to condense numerous individual items into a smaller set of dimensions. This dimension reduction is particularly useful in data simplification, enhancing the clarity and efficiency of subsequent analyses by focusing on essential underlying factors instead of a plethora of individual variables.

Rotation Methods in Factor Analysis: Once factors are extracted, they are often rotated to achieve a simpler, more interpretable structure. Rotation methods fall into two families: orthogonal and oblique. Orthogonal rotations, such as varimax, keep the factors uncorrelated, which avoids multicollinearity when factor scores are later used as predictors in regression analyses. Oblique rotations allow the factors to correlate, which can be more realistic but makes the solution more complex to interpret.

Verification of Scale Construction: Factor analysis is instrumental in scale construction and validation. In confirmatory factor analysis, a subtype of factor analysis, researchers specify in advance which items should load on which factors, based on theoretical expectations. This method is frequently used to validate the structure of scales, such as measures of the Big Five personality traits, by testing whether the data fit the predetermined factor structure.

Practical Implementation: Constructing Indices through Factor Analysis

Index Construction: Beyond reducing dimensions and validating scales, factor analysis is commonly used to construct indices. Traditional index construction might simply sum the scores of all items. Factor analysis instead identifies which items contribute most to a construct, which allows a more refined index. In questionnaire development, for example, it helps identify questions that can be omitted, streamlining the instrument while maintaining its psychometric integrity.

Examples of Factor Analysis in Practice

- Educational Research: In education, factor analysis can identify underlying factors such as motivation and support from measures of students' behaviors and attitudes; researchers can then relate these factors to student performance.

- Marketing Research: Marketers use factor analysis to group consumer preferences and behaviors, creating targeted strategies for specific segments.

- Psychological Assessment: Psychologists use factor analysis to refine diagnostic tools, ensuring tests measure distinct psychological traits accurately.
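The contrast drawn under Index Construction, summing every item versus keeping only the items that reflect the construct, can be sketched in NumPy as follows. Everything here is hypothetical: the simulated questionnaire, the loading vector (a stand-in for loadings a factor analysis would estimate), the 0.4 cutoff, and the function name `loading_based_index`. Items below the cutoff are dropped, and the remaining items are standardized and averaged.

```python
import numpy as np

def loading_based_index(items, loadings, threshold=0.4):
    """Average the standardized items whose |loading| meets the threshold.

    Returns the index values and the (0-based) column positions of the kept items.
    """
    keep = np.abs(loadings) >= threshold
    z = (items - items.mean(axis=0)) / items.std(axis=0, ddof=1)
    return z[:, keep].mean(axis=1), np.flatnonzero(keep)

# Hypothetical questionnaire: 5 items, item 3 (0-based) barely reflects the construct
rng = np.random.default_rng(2)
n = 400
construct = rng.standard_normal(n)
item_loadings = np.array([0.8, 0.7, 0.75, 0.1, 0.65])   # stand-in for estimated loadings
items = np.outer(construct, item_loadings) + 0.5 * rng.standard_normal((n, 5))

sum_score = items.sum(axis=1)                            # traditional index: sum of all items
refined_index, kept = loading_based_index(items, item_loadings)
print("items kept:", kept)
print(f"corr(sum score, refined index) = {np.corrcoef(sum_score, refined_index)[0, 1]:.3f}")
```

A weighted variant would multiply each standardized item by its loading, or use saved factor scores directly; the unweighted mean shown here trades a little precision for an index that is easy to recompute by hand.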

Conclusion

Factor analysis is a powerful tool for uncovering hidden structure in complex datasets. It simplifies data, verifies scales, and helps construct indices, supporting informed decisions. Because it handles large datasets and adapts readily to different fields, it helps researchers across many disciplines draw meaningful conclusions.

Factor Analysis in SPSS

The research question we want to answer with our exploratory factor analysis is:

What are the underlying dimensions of our standardized and aptitude test scores? That is, how do aptitude and standardized tests form performance dimensions?

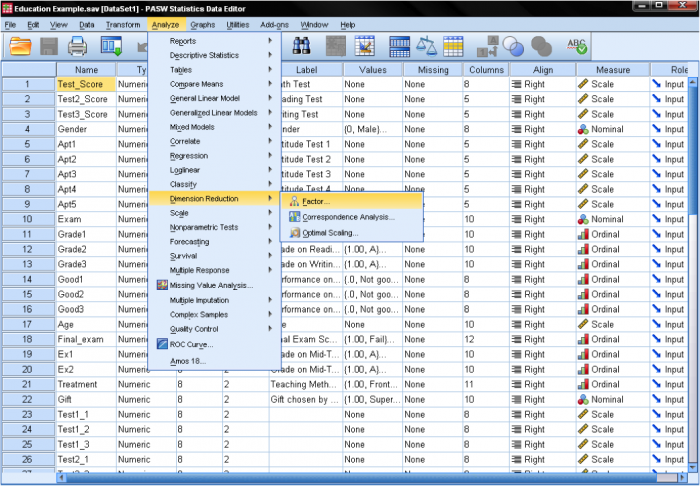

In SPSS, factor analysis is found under Analyze/Dimension Reduction/Factor…

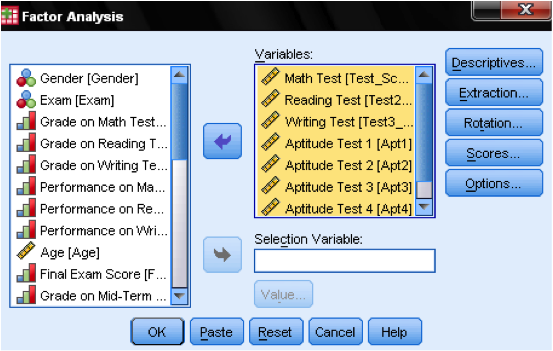

In the Factor Analysis dialog box, we start by adding our variables (the standardized tests math, reading, and writing, as well as aptitude tests 1-5) to the Variables list.

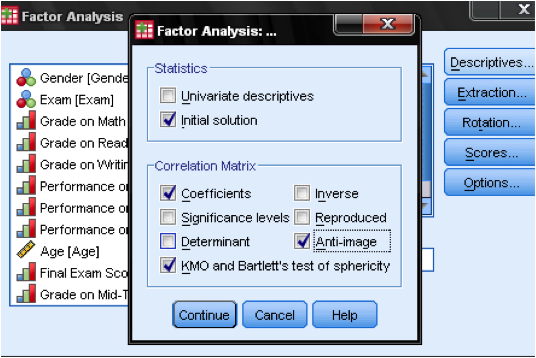

In the “Descriptives” dialog, we request a few statistics to check the assumptions of factor analysis: the Kaiser-Meyer-Olkin (KMO) measure of sampling adequacy, Bartlett's test of sphericity, and the anti-image correlation matrix.
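To show what these diagnostics compute, here is a minimal NumPy sketch on simulated data; the data, the six variables, and the function names `bartlett_sphericity` and `kmo` are hypothetical and do not reproduce SPSS output. Bartlett's test asks whether the correlation matrix differs from an identity matrix (no correlations at all). KMO compares the size of the ordinary correlations with the size of the partial correlations, which are what the anti-image correlation matrix is built from; values closer to 1 indicate data better suited to factor analysis.

```python
import numpy as np

def bartlett_sphericity(data):
    """Bartlett's test of sphericity: chi-square statistic and degrees of freedom."""
    n, p = data.shape
    R = np.corrcoef(data, rowvar=False)
    _, logdet = np.linalg.slogdet(R)             # log|R|, numerically safer than log(det(R))
    statistic = -(n - 1 - (2 * p + 5) / 6) * logdet
    df = p * (p - 1) // 2
    return statistic, df

def kmo(data):
    """Overall KMO and per-variable measures of sampling adequacy (MSA)."""
    R = np.corrcoef(data, rowvar=False)
    R_inv = np.linalg.inv(R)
    scale = np.sqrt(np.diag(R_inv))
    partial = -R_inv / np.outer(scale, scale)     # partial correlations, controlling for all others
    off_diag = ~np.eye(R.shape[0], dtype=bool)
    r2 = np.where(off_diag, R ** 2, 0.0)
    q2 = np.where(off_diag, partial ** 2, 0.0)
    overall = r2.sum() / (r2.sum() + q2.sum())
    per_variable = r2.sum(axis=0) / (r2.sum(axis=0) + q2.sum(axis=0))
    return overall, per_variable

# Hypothetical simulated scores: two latent factors, three indicators each
rng = np.random.default_rng(0)
n = 500
factors = rng.standard_normal((n, 2))
true_loadings = np.array([[0.8, 0.0], [0.7, 0.1], [0.75, 0.0],
                          [0.0, 0.8], [0.1, 0.7], [0.0, 0.75]])
X = factors @ true_loadings.T + 0.5 * rng.standard_normal((n, 6))

chi2_stat, df = bartlett_sphericity(X)
overall_kmo, msa = kmo(X)
print(f"Bartlett chi2 = {chi2_stat:.1f}, df = {df}")
print(f"KMO = {overall_kmo:.3f}, per-variable MSA = {np.round(msa, 3)}")
```

The p-value for Bartlett's statistic comes from a chi-square distribution with `df` degrees of freedom (for example via `scipy.stats.chi2.sf`); it is left out here to keep the sketch NumPy-only.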

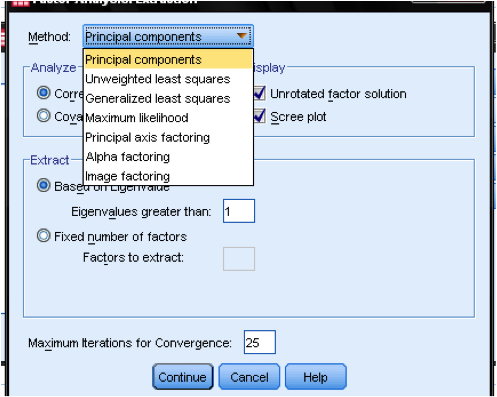

The “Extraction” dialog box lets us specify the extraction method and the criterion for how many factors to extract. At most, SPSS can extract as many factors as there are variables. In exploratory analysis, eigenvalues help determine how many factors to retain; a common rule (the Kaiser criterion) retains factors with eigenvalues greater than 1.
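The eigenvalue rule can be sketched in a few lines of NumPy. The simulated data and the function name `kaiser_criterion` are hypothetical; the sketch computes the eigenvalues of the full correlation matrix (1s on the diagonal) and counts how many exceed the cutoff.

```python
import numpy as np

def kaiser_criterion(data, cutoff=1.0):
    """Eigenvalues of the correlation matrix (descending) and how many exceed `cutoff`."""
    R = np.corrcoef(data, rowvar=False)
    eigenvalues = np.linalg.eigvalsh(R)[::-1]    # eigvalsh returns ascending order
    return eigenvalues, int(np.sum(eigenvalues > cutoff))

# Hypothetical simulated data: 6 variables driven by 2 latent factors plus noise
rng = np.random.default_rng(0)
n = 500
factors = rng.standard_normal((n, 2))
true_loadings = np.array([[0.8, 0.0], [0.7, 0.1], [0.75, 0.0],
                          [0.0, 0.8], [0.1, 0.7], [0.0, 0.75]])
X = factors @ true_loadings.T + 0.5 * rng.standard_normal((n, 6))

eigenvalues, n_retain = kaiser_criterion(X)
print(np.round(eigenvalues, 2), "-> retain", n_retain)
```

Because the eigenvalues of a correlation matrix sum to the number of variables, an eigenvalue above 1 means a factor accounts for more variance than a single standardized variable does, which is the intuition behind the cutoff.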

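Next, we select an extraction method. Principal components is the default in SPSS. It extracts uncorrelated linear combinations of the variables: the first component explains the most variance, and each subsequent component explains less while remaining uncorrelated with the others. This method is well suited to data reduction but is not ideal for identifying latent constructs, because it analyzes the total variance of the variables rather than only the variance they share.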
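As a rough illustration of principal components extraction, the NumPy sketch below eigendecomposes the correlation matrix and scales each eigenvector by the square root of its eigenvalue to obtain loadings. The simulated data and the function name `principal_components` are hypothetical.

```python
import numpy as np

def principal_components(data, n_components):
    """Component loadings and the proportion of total variance explained by each component."""
    R = np.corrcoef(data, rowvar=False)           # diagonal stays at 1: total variance is analyzed
    eigenvalues, eigenvectors = np.linalg.eigh(R)
    order = np.argsort(eigenvalues)[::-1]         # largest variance first
    eigenvalues, eigenvectors = eigenvalues[order], eigenvectors[:, order]
    # Loadings = eigenvectors scaled by sqrt(eigenvalue): correlations of variables with components
    loadings = eigenvectors[:, :n_components] * np.sqrt(eigenvalues[:n_components])
    explained = eigenvalues / eigenvalues.sum()
    return loadings, explained

# Hypothetical simulated data: 6 variables driven by 2 latent factors plus noise
rng = np.random.default_rng(0)
n = 500
factors = rng.standard_normal((n, 2))
true_loadings = np.array([[0.8, 0.0], [0.7, 0.1], [0.75, 0.0],
                          [0.0, 0.8], [0.1, 0.7], [0.0, 0.75]])
X = factors @ true_loadings.T + 0.5 * rng.standard_normal((n, 6))

pc_loadings, explained = principal_components(X, n_components=2)
print(np.round(pc_loadings, 2))
print("proportion of variance:", np.round(explained, 3))
```

Since each variable's unique variance also feeds into the components, communalities from this approach tend to be higher than those from a common factor method such as principal axis factoring. The sign of each eigenvector is arbitrary, so a whole column of loadings may come out negated.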

The second most common extraction method is principal axis factoring. This method is appropriate when the goal is to identify latent constructs rather than simply to reduce the data, because it models only the variance the variables have in common. Our research question concerns the dimensions behind the variables, so we use principal axis factoring.
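A minimal sketch of iterated principal axis factoring is shown below, assuming the common textbook procedure: start from squared multiple correlations as communality estimates, place them on the diagonal of the correlation matrix, extract eigenvectors, and repeat until the communalities stop changing. The function name, iteration limit, tolerance, and simulated data are hypothetical choices, not SPSS settings.

```python
import numpy as np

def principal_axis_factoring(data, n_factors, max_iter=100, tol=1e-6):
    """Iterated principal axis factoring on the correlation matrix."""
    R = np.corrcoef(data, rowvar=False)
    # Starting communalities: squared multiple correlation of each variable with all others
    communalities = 1.0 - 1.0 / np.diag(np.linalg.inv(R))
    for _ in range(max_iter):
        reduced = R.copy()
        np.fill_diagonal(reduced, communalities)      # analyze shared variance only
        eigenvalues, eigenvectors = np.linalg.eigh(reduced)
        top = np.argsort(eigenvalues)[::-1][:n_factors]
        loadings = eigenvectors[:, top] * np.sqrt(np.clip(eigenvalues[top], 0.0, None))
        updated = (loadings ** 2).sum(axis=1)         # new communality estimates
        converged = np.max(np.abs(updated - communalities)) < tol
        communalities = updated
        if converged:
            break
    return loadings, communalities

# Hypothetical simulated data: 6 variables driven by 2 latent factors plus noise
rng = np.random.default_rng(0)
n = 500
factors = rng.standard_normal((n, 2))
true_loadings = np.array([[0.8, 0.0], [0.7, 0.1], [0.75, 0.0],
                          [0.0, 0.8], [0.1, 0.7], [0.0, 0.75]])
X = factors @ true_loadings.T + 0.5 * rng.standard_normal((n, 6))

paf_loadings, h2 = principal_axis_factoring(X, n_factors=2)
print(np.round(paf_loadings, 2))
print("communalities:", np.round(h2, 2))
```

The only difference from the principal components sketch is the diagonal: replacing the 1s with communality estimates is what restricts the analysis to shared variance. The unrotated loadings are usually hard to interpret, which is why a rotation step follows.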

The next step is to select a rotation method. After extracting the factors, SPSS can rotate them to make the solution easier to interpret; rotation does not change how well the factors reproduce the correlations. The most commonly used method is varimax, an orthogonal rotation that tends to produce factor loadings that are either very high or very low, making it easier to match each item with a single factor. If one desires non-orthogonal factors (i.e., factors that can correlate), a direct oblimin rotation is appropriate. Here, we choose varimax.
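To make the "very high or very low" behavior concrete, here is a sketch of varimax using Kaiser's classic pairwise-angle algorithm, with Kaiser normalization (each row scaled to unit length before rotating and scaled back afterward), which SPSS applies to varimax by default. The function name, tolerance, and demo matrix are hypothetical: the demo takes a clean two-factor pattern, turns it 30 degrees so the loadings become muddled, and lets varimax recover the clean pattern.

```python
import numpy as np

def varimax(loadings, normalize=True, max_sweeps=100, tol=1e-10):
    """Varimax rotation via Kaiser's pairwise angles; returns rotated loadings and rotation matrix."""
    L = np.array(loadings, dtype=float)           # work on a copy
    p, k = L.shape
    if normalize:                                 # Kaiser normalization
        row_norms = np.sqrt((L ** 2).sum(axis=1))
        L = L / row_norms[:, None]
    rotation = np.eye(k)
    for _ in range(max_sweeps):
        largest_angle = 0.0
        for j in range(k - 1):
            for m in range(j + 1, k):
                x, y = L[:, j], L[:, m]
                u, v = x ** 2 - y ** 2, 2 * x * y
                A, B = u.sum(), v.sum()
                C, D = (u ** 2 - v ** 2).sum(), 2 * (u * v).sum()
                # Angle that maximizes the varimax criterion for this pair of columns
                phi = 0.25 * np.arctan2(D - 2 * A * B / p, C - (A ** 2 - B ** 2) / p)
                c, s = np.cos(phi), np.sin(phi)
                turn = np.array([[c, -s], [s, c]])    # (x, y) -> (x c + y s, -x s + y c)
                L[:, [j, m]] = L[:, [j, m]] @ turn
                rotation[:, [j, m]] = rotation[:, [j, m]] @ turn
                largest_angle = max(largest_angle, abs(phi))
        if largest_angle < tol:
            break
    if normalize:
        L = L * row_norms[:, None]                # undo the normalization
    return L, rotation

# Hypothetical demo: a clean pattern turned 30 degrees away from simple structure
simple = np.array([[0.8, 0.0], [0.7, 0.0], [0.75, 0.0],
                   [0.0, 0.8], [0.0, 0.7], [0.0, 0.75]])
theta = np.deg2rad(30)
turn30 = np.array([[np.cos(theta), -np.sin(theta)],
                   [np.sin(theta), np.cos(theta)]])
unrotated = simple @ turn30
rotated, rotation = varimax(unrotated)
print(np.round(rotated, 3))
```

Because the rotation matrix is orthogonal, each variable's communality (row sum of squared loadings) is unchanged; only the way that variance is split across factors moves toward one dominant loading per row.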

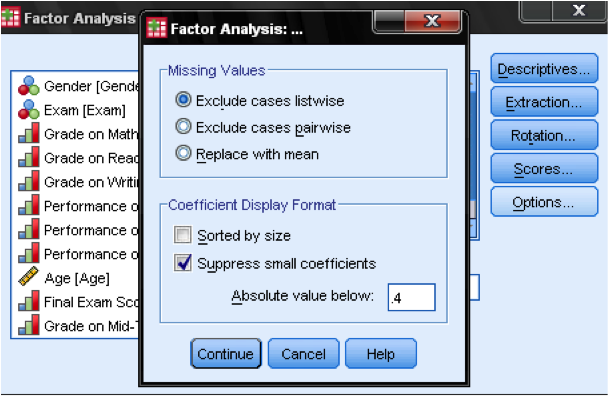

In the Options dialog box, we can control how missing values are treated. Replacing missing values with the variable mean keeps all cases in the analysis instead of dropping incomplete cases listwise, so we do not over-penalize missing values. Note, however, that mean replacement shrinks the variances and tends to weaken the correlations, so it is best suited to data with few missing values.

We can also choose not to display all factor loadings. The loading tables are much easier to read when small loadings are suppressed. The default cutoff is 0.10, but in this case we raise it to 0.40.

The last step is to save the results in the Scores… dialog. This adds one factor-score variable per extracted factor to the dataset; with the default regression method, the scores have a mean of 0.
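The three Options and Scores settings can be mimicked in a short NumPy sketch: mean replacement of missing values, blanking of loadings below 0.40 for display, and factor scores computed with the regression method (weights equal to the inverse correlation matrix times the loadings). The function names, the simulated data with about 5% missing values, and the fixed loading matrix (a stand-in for a rotated solution) are all hypothetical.

```python
import numpy as np

def mean_impute(data):
    """Replace each NaN with its column mean (the column mean itself is unchanged)."""
    filled = data.copy()
    col_means = np.nanmean(filled, axis=0)
    rows, cols = np.where(np.isnan(filled))
    filled[rows, cols] = col_means[cols]
    return filled

def suppress_small(loadings, threshold=0.4):
    """Blank out loadings with |value| < threshold; a display aid only."""
    return np.where(np.abs(loadings) >= threshold, loadings, np.nan)

def regression_scores(data, loadings):
    """Regression-method factor scores: standardized data times R^-1 times loadings."""
    Z = (data - data.mean(axis=0)) / data.std(axis=0, ddof=1)
    R = np.corrcoef(data, rowvar=False)
    weights = np.linalg.solve(R, loadings)        # R^{-1} @ loadings without an explicit inverse
    return Z @ weights

# Hypothetical simulated data with roughly 5% of values missing at random
rng = np.random.default_rng(1)
n = 300
true_factors = rng.standard_normal((n, 2))
loadings = np.array([[0.8, 0.0], [0.7, 0.0], [0.75, 0.0],
                     [0.0, 0.8], [0.0, 0.7], [0.0, 0.75]])   # stand-in for a rotated solution
X = true_factors @ loadings.T + 0.5 * rng.standard_normal((n, 6))
X[rng.random(X.shape) < 0.05] = np.nan

X_filled = mean_impute(X)
print(suppress_small(loadings))
scores = regression_scores(X_filled, loadings)
print("score means:", np.round(scores.mean(axis=0), 6))
```

The imputed columns have a smaller standard deviation than the observed values alone, which is the attenuation mentioned above: the filled-in values add cases but no spread.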