Conduct and Interpret a Multiple Linear Regression

Understanding Multiple Linear Regression

Multiple linear regression is a staple in the world of statistical analysis, serving as a powerful tool for predicting and understanding the relationship between one outcome variable and two or more predictor variables. It’s like piecing together a puzzle where you’re trying to figure out how different factors come together to influence a specific result.

The Essence of Multiple Linear Regression

At its core, multiple linear regression fits a linear surface to a cloud of data points: a line with one predictor, a plane with two, and a hyperplane with more. The fit aims to best represent the relationship between the dependent (outcome) variable and the independent (predictor) variables. While it might sound complex, think of it as finding the best path through a forest that connects various points of interest: the path represents the predicted outcomes based on different starting conditions.
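
In symbols, a model with k predictors is commonly written as follows. The notation is a standard textbook convention rather than something taken from the text above:

$$
y_i = \beta_0 + \beta_1 x_{i1} + \beta_2 x_{i2} + \dots + \beta_k x_{ik} + \varepsilon_i
$$

Here $y_i$ is the outcome for observation $i$, $x_{i1}, \dots, x_{ik}$ are its predictor values, $\beta_0$ is the intercept, $\beta_1, \dots, \beta_k$ are the slopes, and $\varepsilon_i$ is the error term. Ordinary least squares picks the coefficients that minimize the sum of squared residuals $\sum_i (y_i - \hat{y}_i)^2$.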

Stages of Multiple Linear Regression Analysis

- Analyzing Data: Before anything, we look at the data to see how the variables relate to each other. This involves checking for patterns or directions in the data, which can often be visualized through scatter plots.

- Estimating the Model: Here, we fit the line—or in more complex cases, a multi-dimensional plane—to the data. This step is about finding the formula that best predicts the outcome based on the predictors.

- Evaluating the Model: Finally, we assess how good our model is. Does it make accurate predictions? Is it useful for the questions we’re trying to answer?
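
The three stages above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the data are synthetic (invented here, not from any study), the variable names are hypothetical, and NumPy's least-squares routine stands in for whatever statistical package is actually used.

```python
import numpy as np

# Synthetic data, invented purely for illustration: 200 observations, 2 predictors.
rng = np.random.default_rng(0)
n = 200
x1 = rng.normal(size=n)
x2 = rng.normal(size=n)
y = 3.0 + 2.0 * x1 - 1.5 * x2 + rng.normal(scale=0.5, size=n)

# Stage 1, analyze: correlation of each predictor with the outcome.
r_x1 = np.corrcoef(x1, y)[0, 1]
r_x2 = np.corrcoef(x2, y)[0, 1]

# Stage 2, estimate: ordinary least squares with an intercept column.
X = np.column_stack([np.ones(n), x1, x2])
coef, *_ = np.linalg.lstsq(X, y, rcond=None)

# Stage 3, evaluate: R-squared is the share of outcome variance explained.
fitted = X @ coef
ss_res = np.sum((y - fitted) ** 2)
ss_tot = np.sum((y - y.mean()) ** 2)
r_squared = 1.0 - ss_res / ss_tot

print("correlations:", round(r_x1, 2), round(r_x2, 2))
print("coefficients (intercept, x1, x2):", np.round(coef, 2))
print("R-squared:", round(r_squared, 3))
```

Because the data were generated from known coefficients, the estimates should land close to 3.0, 2.0 and -1.5, which makes it easy to see what each stage contributes.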

Uses of Multiple Linear Regression

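- Causal Analysis: Estimating how strongly changes in the predictor variables are associated with changes in the outcome; reading these associations as causal also requires a suitable study design.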

- Forecasting Effects: Predicting how adjustments to predictor variables will affect the outcome.

- Trend Forecasting: Using the model to predict future values and trends based on current data.

Multiple Linear Regression in Practice

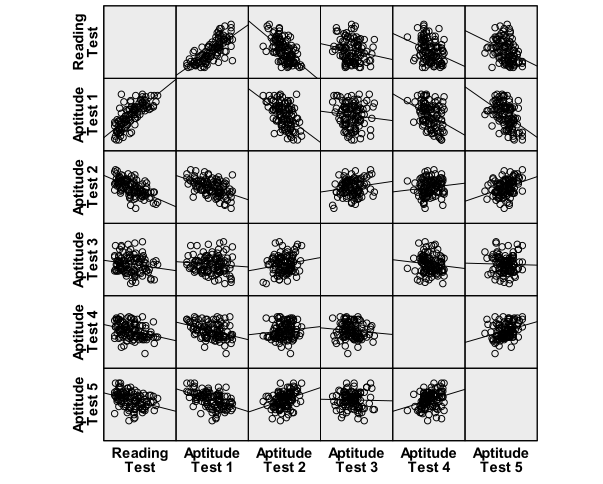

Consider a study aiming to predict students’ reading scores on a standardized test based on five different aptitude tests. The first step is to ensure a linear relationship between the aptitude tests (predictor variables) and the reading scores (outcome variable). This is typically done by examining scatter plots for each predictor against the outcome, which can be easily generated in statistical software like SPSS through the Graphs menu.

In SPSS:

To tackle our research question (can we predict a student’s reading score from their performance on five aptitude tests?), we start by visually inspecting the relationship between these variables using scatter plots. This can be done individually for each predictor or collectively using the matrix scatter plot found under Graphs/Legacy Dialogs/Scatter/Dot in SPSS. This visual inspection checks the linearity assumption of multiple linear regression, setting the stage for a meaningful analysis.

In essence, multiple linear regression is about understanding and predicting the complex interplay between various factors and an outcome. By methodically analyzing, estimating, and evaluating, it provides a window into the causal relationships and trends that define our world.

The scatter plots indicate a good linear relationship between the reading score and aptitude tests 1 to 5: the relationship is positive for aptitude test 1 and negative for aptitude tests 2 to 5.

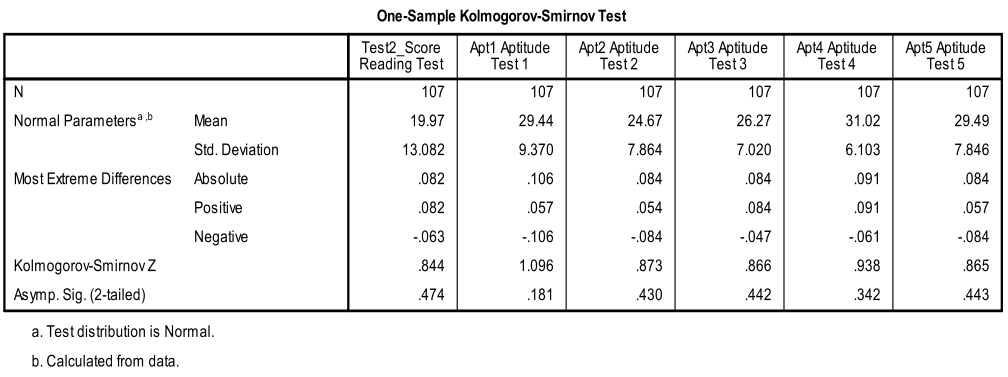

Secondly, we need to check for multivariate normality. This can be done either with an ‘eyeball’ test on the Q-Q plots or with the one-sample Kolmogorov-Smirnov (K-S) test, whose null hypothesis is that the variable follows a normal distribution. The K-S test is not significant for any of the variables, so we have no evidence against normality and proceed on that assumption.
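
To make the K-S idea concrete, here is a minimal sketch that computes the K-S distance between a sample and a normal curve using only the Python standard library. The function name and both samples are made up for illustration. The cutoff 1.36/sqrt(n) is the usual large-sample 5% value for a fully specified distribution; because the mean and standard deviation are estimated from the same sample here, that cutoff is conservative, so treat the comparison as a rough guide only.

```python
import math
from statistics import NormalDist, mean, stdev

def ks_statistic_normal(values):
    """K-S distance between the sample and a normal curve with the sample's mean and SD."""
    xs = sorted(values)
    n = len(xs)
    fitted = NormalDist(mean(xs), stdev(xs))
    d = 0.0
    for i, x in enumerate(xs, start=1):
        cdf = fitted.cdf(x)
        # The empirical CDF jumps from (i - 1)/n to i/n at x, so check both sides.
        d = max(d, i / n - cdf, cdf - (i - 1) / n)
    return d

n = 50
# Illustrative samples: evenly spaced quantiles of a normal and of an exponential distribution.
probs = [(i - 0.5) / n for i in range(1, n + 1)]
bell_shaped = [NormalDist(100, 15).inv_cdf(p) for p in probs]
skewed = [-math.log(1.0 - p) for p in probs]

d_bell = ks_statistic_normal(bell_shaped)
d_skewed = ks_statistic_normal(skewed)
critical = 1.36 / math.sqrt(n)  # approximate 5% cutoff, see the caveat above

print("bell-shaped sample D:", round(d_bell, 3))
print("skewed sample D:", round(d_skewed, 3))
print("approximate cutoff:", round(critical, 3))
```

A small distance means the sample's cumulative distribution hugs the normal curve; the skewed sample sits visibly further away.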

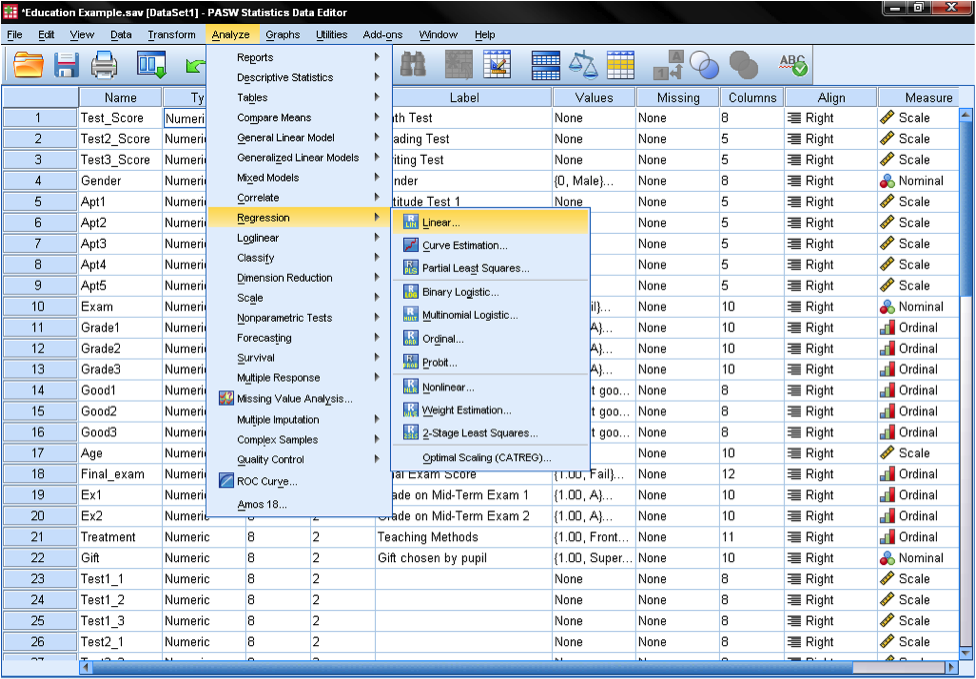

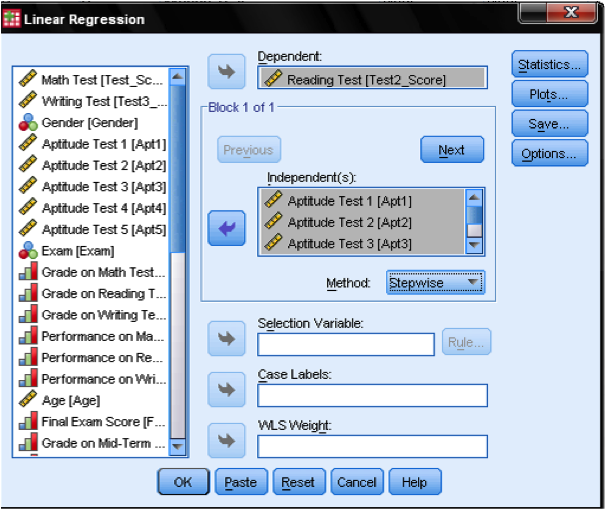

Multiple linear regression is found in SPSS in Analyze/Regression/Linear…

To answer our research question we need to enter the variable reading scores as the dependent variable in our multiple linear regression model and the aptitude test scores (1 to 5) as independent variables. We also select stepwise as the method. The default method for the multiple linear regression analysis is ‘Enter’, which means that all variables are forced into the model. But since over-fitting is a concern of ours, we want only the variables in the model that explain additional variance. Stepwise means that the variables are entered into the regression model one at a time in the order of their explanatory power, and a variable already in the model is removed again if it no longer contributes significantly.

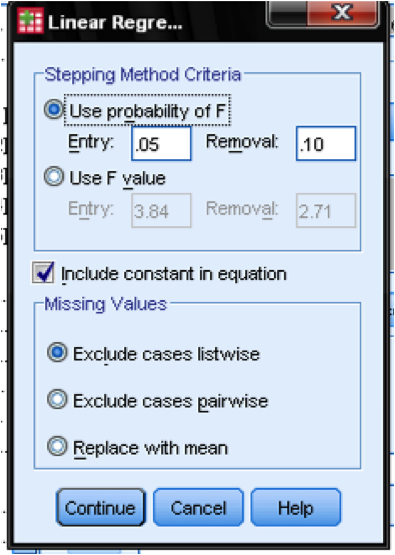

In the dialog Options… we can define the criteria for stepwise inclusion in the model. We include a variable in our multiple linear regression model if the probability of its F statistic is at most 0.05, and we exclude it again if that probability rises to 0.1 or above. This dialog box also allows us to manage missing values (e.g., replace them with the mean).
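
The selection logic can be sketched roughly as follows. This is a simplified forward selection, not the procedure SPSS runs: it uses a fixed F-to-enter threshold instead of a probability (3.84 is roughly the 5% critical value of F with one numerator degree of freedom and many denominator degrees of freedom), and it omits the removal step. The function names, variable names and data are all hypothetical.

```python
import numpy as np

def rss(X, y):
    """Residual sum of squares of an OLS fit of y on the columns of X."""
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ coef
    return float(resid @ resid)

def forward_select(predictors, y, f_to_enter=3.84):
    """Add, one at a time, the predictor with the largest partial F while F >= f_to_enter."""
    n = len(y)
    chosen = []
    remaining = list(predictors)
    X_current = np.ones((n, 1))  # start from an intercept-only model
    rss_current = rss(X_current, y)
    while remaining:
        best = None
        for name in remaining:
            X_try = np.column_stack([X_current, predictors[name]])
            rss_try = rss(X_try, y)
            df_resid = n - X_try.shape[1]
            # Partial F: drop in residual sum of squares relative to the remaining error variance.
            f_stat = (rss_current - rss_try) / (rss_try / df_resid)
            if best is None or f_stat > best[1]:
                best = (name, f_stat, X_try, rss_try)
        if best[1] < f_to_enter:
            break  # no remaining predictor explains enough additional variance
        name, _, X_current, rss_current = best
        chosen.append(name)
        remaining.remove(name)
    return chosen

# Synthetic example: apt1 and apt2 drive the outcome, apt3 is pure noise.
rng = np.random.default_rng(42)
n = 100
tests = {name: rng.normal(size=n) for name in ("apt1", "apt2", "apt3")}
reading = 50 + 2.0 * tests["apt1"] - 1.0 * tests["apt2"] + rng.normal(scale=0.5, size=n)

selected = forward_select(tests, reading)
print("entered in this order:", selected)
```

The strongest predictor enters first, and a pure-noise predictor would usually be left out, although by chance it can occasionally clear the threshold.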

The dialog Statistics… allows us to include additional statistics that we need to assess the validity of our linear regression analysis. Even though it is not a time series, we include Durbin-Watson to check for autocorrelation, and we include the collinearity diagnostics, which check for multicollinearity among the predictors.
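
Both diagnostics are simple to compute by hand, which helps when reading the output. The sketch below uses the standard formulas for the Durbin-Watson statistic and for the variance inflation factor (VIF, the reciprocal of tolerance); the helper names and the synthetic data are assumptions made for this example.

```python
import numpy as np

def ols_residuals(X, y):
    """Residuals of an OLS fit of y on the columns of X."""
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return y - X @ coef

def durbin_watson(resid):
    """Near 2: no first-order autocorrelation; toward 0: positive; toward 4: negative."""
    return float(np.sum(np.diff(resid) ** 2) / np.sum(resid ** 2))

def vif(predictors):
    """VIF_j = 1 / (1 - R_j^2), with R_j^2 from regressing predictor j on the others."""
    n, k = predictors.shape
    out = []
    for j in range(k):
        target = predictors[:, j]
        others = np.column_stack([np.ones(n), np.delete(predictors, j, axis=1)])
        resid = ols_residuals(others, target)
        r2 = 1.0 - (resid @ resid) / np.sum((target - target.mean()) ** 2)
        out.append(float(1.0 / (1.0 - r2)))
    return out

# Synthetic data: x3 is nearly a copy of x1, so both should show inflated VIFs.
rng = np.random.default_rng(7)
n = 300
x1 = rng.normal(size=n)
x2 = rng.normal(size=n)
x3 = x1 + rng.normal(scale=0.1, size=n)
P = np.column_stack([x1, x2, x3])
y = 1.0 + 2.0 * x1 + 0.5 * x2 + rng.normal(size=n)

resid = ols_residuals(np.column_stack([np.ones(n), P]), y)
dw = durbin_watson(resid)
vifs = vif(P)

print("Durbin-Watson:", round(dw, 2))
print("VIF for x1, x2, x3:", [round(v, 1) for v in vifs])
```

A VIF close to 1 means a predictor shares little variance with the others; large values flag predictors that are close to linear combinations of the rest.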

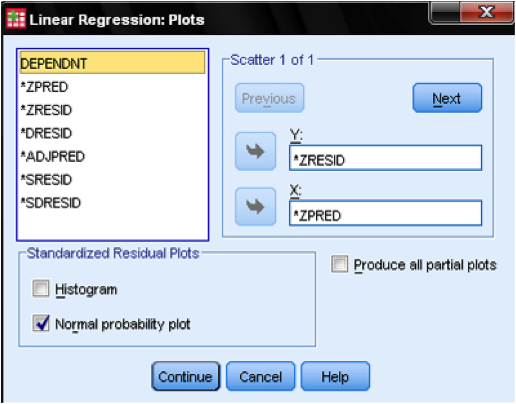

In the dialog Plots…, we add the standardized residual plot (ZPRED on x-axis and ZRESID on y-axis), which allows us to eyeball homoscedasticity and normality of residuals.
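
The two plotted quantities can be reproduced outside SPSS as a rough check. In this sketch ZPRED is taken to be the z-scored predicted values and ZRESID the residuals divided by the residual standard error; these definitions are an assumption about what the plot shows, and the data are synthetic. Instead of drawing the plot, the code compares the residual spread in the lower and upper half of the predicted values, which is a crude numeric stand-in for eyeballing homoscedasticity.

```python
import numpy as np

# Synthetic data with constant error variance, invented for illustration.
rng = np.random.default_rng(1)
n = 150
X = np.column_stack([np.ones(n), rng.normal(size=n), rng.normal(size=n)])
y = X @ np.array([50.0, 4.0, -3.0]) + rng.normal(scale=2.0, size=n)

coef, *_ = np.linalg.lstsq(X, y, rcond=None)
predicted = X @ coef
resid = y - predicted

# Standardized predicted values: z-scores of the fitted values.
zpred = (predicted - predicted.mean()) / predicted.std(ddof=1)
# Standardized residuals: residuals divided by the residual standard error.
zresid = resid / np.sqrt(np.sum(resid ** 2) / (n - X.shape[1]))

# Crude homoscedasticity check: residual spread for low versus high predictions.
median_pred = np.median(zpred)
spread_low = zresid[zpred < median_pred].std(ddof=1)
spread_high = zresid[zpred >= median_pred].std(ddof=1)
spread_ratio = spread_high / spread_low

print("spread ratio (high / low predictions):", round(spread_ratio, 2))
```

A ratio far from 1, or a funnel shape when `zpred` and `zresid` are plotted against each other, would suggest that the error variance changes with the predicted value.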